Deploy and Scale Inference Workflows with the Leader in Purpose-Built Solutions for AI

NeuReality is the first AI-centric solution specifically designed to democratize AI adoption.

Our multidisciplinary, system-level approach unlocks AI’s true potential by breaking traditional silos and solving the problems of workflow and process management.

These revolutionary tools enable the scale of real-life AI applications and accelerate the possibilities of human achievement.

The Benefits of AI-Centric Architecture

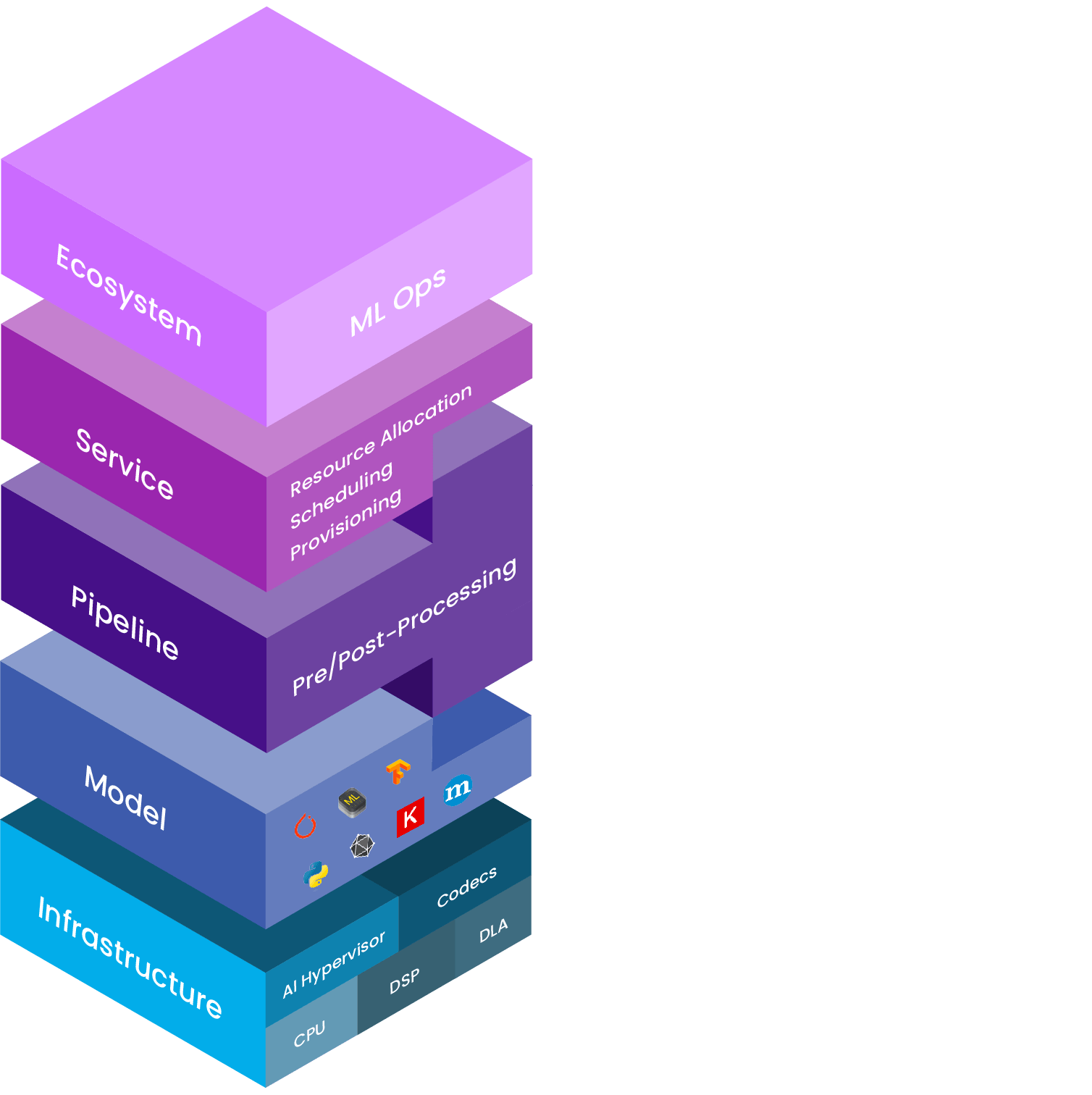

Our next-gen AI-centric architecture combines our NR1 Network Addressable Processing Unit (NAPU) with our inference-serving building blocks in software.

This approach reduces dependency on CPUs, NICs, and PCI switches by moving simple but critical data-path functions from software to hardware.

The Result

The result is an all-new, innovative AI-optimized system built from the ground up to enable ultra-scalability.

One-Stop Shop for AI Inference

Rather than making you spend valuable time and energy integrating elements from different vendors, our integrated set of software tools combines all of the components into a single, high-quality UI/UX.

Once you’ve done AI this way, you’ll never go back!

Goodbye, bottlenecks.

Hello, scalability.

Addressing barriers to AI adoption

Deploying a trained AI model is technically complex and requires multiple people with specialized skills. Deep learning accelerators (DLAs) are powerful, but they are trapped behind bottlenecks in the system.

Holistic solution for inference

Our solution, complete with purpose-built software and a first-of-a-kind network addressable inference server-on-a-chip, delivers better performance and scalability at lower cost and power.

How we make AI easy

With our unique network-connected approach and software integration tools, we make it easier to deploy, afford, use, and manage AI.

Visionaries who actually see.

Specific Challenges, Specific Solutions

Instead of simply adding more and more DLAs to an already over-taxed and inefficient system, NeuReality’s team of innovators explored the root causes of AI’s current barriers and solved for those specific challenges.

This approach provides full support for available AI frameworks, giving you the flexibility to deploy seamlessly and adapt to any multi-tenant cloud environment or networking protocol.

This infrastructure comprises a new server class, a cloud-aware virtualized SDK coupled with a complete set of software tools, the AI-Server-on-a-Chip NAPU, and the ultra-scalability that comes with this approach.